The Deterministic Workflow That Broke When The Model Changed

The workflow was deterministic until the model changed.

It had a graph. It had step state. It had routing rules. Given the same run state, the engine would take the same next step. That was the contract we cared about, because production workflows are not allowed to feel probabilistic just because one step contains an LLM.

Then one customer workflow started failing without a workflow change.

The prompt still asked for structured JSON. The downstream steps still expected the same fields. The runtime still rendered templates and routed the same way. The only meaningful difference was the model selected for the LLM step.

One model returned the JSON as plain JSON. Another returned the same kind of payload wrapped in a Markdown code fence.

That sounds harmless until the next step is not reading prose. It is reading structured state. The template expected a field to exist, tried to render it, and the error surfaced later as:

Template result is undefined

That is a bad error for an operator and a worse error for the runtime. The actual failure happened at the model boundary, but the engine discovered it downstream after the value had already been treated as valid workflow state.
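A minimal sketch of how the failure travels. It assumes the step parsed the model's reply with a plain JSON parser and wrote nothing when parsing failed. The function name and sample payloads are hypothetical, not the engine's actual code.

```python
import json

FENCE = "`" * 3  # built from single backticks so the sample nests cleanly

plain_reply = '{"decision": "approve", "reason": "within limits", "next_action": "notify"}'
fenced_reply = f"{FENCE}json\n{plain_reply}\n{FENCE}"


def naive_extract(reply: str) -> dict:
    """Hypothetical lenient extraction: swallow parse errors and return no fields."""
    try:
        return json.loads(reply)
    except json.JSONDecodeError:
        return {}  # the real failure is silently dropped here


run_state = {}
run_state.update(naive_extract(fenced_reply))

# The step looks finished, but the field a later template needs was never written.
print(run_state.get("decision"))  # None
```

Nothing fails at the point where the fenced reply is rejected. The absence only becomes visible when a later step reads `decision`.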

The false assumption

We had made workflow execution deterministic, but we had not made the LLM boundary deterministic.

Those are different things.

Inside the engine, the next step came from explicit state. The LLM step was supposed to add a few structured fields into that state. Once those fields existed, the rest of the workflow could behave like ordinary software.

The mistake was treating "the prompt asks for JSON" as equivalent to "the runtime has structured JSON." The runtime had no proof.

A model can follow the user's intent and still break the program. Returning JSON inside Markdown is reasonable in a chat transcript. It is wrong at a runtime boundary where the next step needs decision, reason, or next_action to exist as fields.

The workflow did not fail because the model was stupid. It failed because the system accepted a soft convention where it needed a hard contract.

Why better prompting was not enough

The first tempting fix was to tighten the prompt. Add more capital letters. Say "return raw JSON only." Add "do not wrap in Markdown." Maybe switch models back and move on.

That can reduce the failure rate. It cannot be the boundary.

A prompt is an instruction to a model. A contract is something the runtime can check. The difference matters most when the workflow is authored once, then run many times under slightly different model versions, prompts, deployments, and input data.

If the runtime needs a field, the runtime should know that before a downstream template tries to use it.

The right question changed from:

"Can we get the model to return the shape we want?"

to:

"What does the workflow require before this step is allowed to finish?"

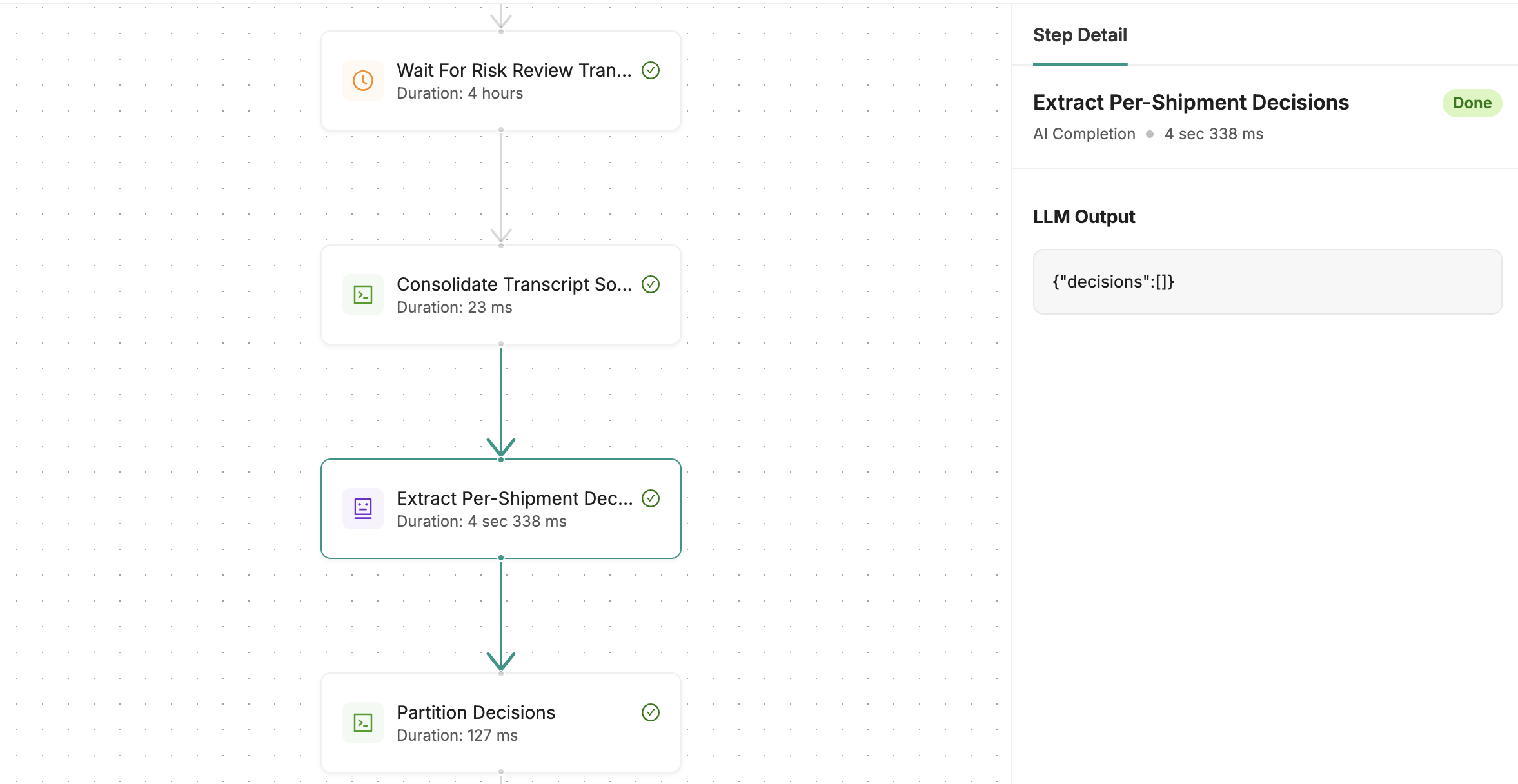

The change we made

We added required outputs for LLM agent steps.

An author can declare the run-state keys an agent step must produce. After the agent reaches a terminal state, the executor checks whether those keys exist. It does not decide whether decision is the right decision. It verifies that decision was written before the next template gets to read it.

For a step that needed a decision, the contract looked like this:

    required_outputs:
      state_keys:
        - key: decision
        - key: reason
        - key: next_action
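The presence check itself can be very small. The sketch below assumes "exists" means the key is present in a dictionary-like run state. `RequiredOutputs` and `missing_keys` are hypothetical names used for illustration, not the executor's real API.

```python
from dataclasses import dataclass, field


@dataclass
class RequiredOutputs:
    """Hypothetical in-memory form of a required_outputs declaration."""
    state_keys: list[str] = field(default_factory=list)


def missing_keys(required: RequiredOutputs, run_state: dict) -> list[str]:
    """Return declared keys that are absent from run state.

    Only presence is checked; the value itself is not judged.
    """
    return [key for key in required.state_keys if key not in run_state]


contract = RequiredOutputs(state_keys=["decision", "reason", "next_action"])
partial_state = {"decision": "approve", "reason": "within limits"}
print(missing_keys(contract, partial_state))  # ['next_action']
```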

When required outputs are missing, the runtime does not immediately continue. It stores retry metadata on the agent state and reinvokes the agent with a specific correction: write the missing keys into run state before completing.

That correction can happen up to two times. If the agent produces the missing state, the workflow continues. If it needs to wait for another event, the step stays waiting instead of being forced through as success or failure.

Defaults are allowed, but only when the workflow author declared them for that required key. That part matters. A default is not a parser trick; it is a product decision. Some missing fields can safely become manual_review. Some cannot. The runtime should not invent that answer.

If retries are exhausted and no declared default exists, the step fails explicitly with the missing keys named in the error.

The new failure is less mysterious:

llm_agent completed without producing required outputs after correction retries: decision

The interesting part is where the error moves. Before, a malformed or unpersisted LLM output could become a confusing template failure later. Afterward, the agent step itself owns the failure.
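The retry, default, and failure rules described in this section can be sketched as one function. This is an illustration under assumptions, not the executor's code: the function names, the `reinvoke_agent` callback, and the dictionary-based run state are hypothetical, and the waiting state is left out for brevity.

```python
MAX_CORRECTION_RETRIES = 2  # corrections can happen up to two times


class MissingRequiredOutputs(Exception):
    """Raised when required keys are still absent and have no declared default."""


def find_missing(required, run_state):
    return [key for key in required if key not in run_state]


def enforce_required_outputs(required, defaults, run_state, reinvoke_agent):
    """Retry, then apply author-declared defaults, then fail with keys named."""
    missing = find_missing(required, run_state)
    retries = 0
    while missing and retries < MAX_CORRECTION_RETRIES:
        retries += 1
        # Hypothetical hook: ask the agent to write the missing keys.
        reinvoke_agent(missing, run_state)
        missing = find_missing(required, run_state)

    # Only defaults the author declared are used; the runtime invents nothing.
    unresolved = [key for key in missing if key not in defaults]
    if unresolved:
        raise MissingRequiredOutputs(
            "llm_agent completed without producing required outputs "
            "after correction retries: " + ", ".join(unresolved)
        )
    for key in missing:
        run_state[key] = defaults[key]
    return run_state


# Demo: an agent that never writes the missing keys.
calls = []
state = {"reason": "ambiguous invoice"}
try:
    enforce_required_outputs(
        ["decision", "reason", "next_action"],
        {"next_action": "manual_review"},  # declared default for one key only
        state,
        lambda missing, run_state: calls.append(list(missing)),
    )
    message = None
except MissingRequiredOutputs as err:
    message = str(err)
print(message)
```

In this sketch a default is applied only once every remaining key can be resolved, so a failing step does not leave partially defaulted state behind. That ordering is a design choice of the example, not something the text above specifies.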

The boundary, not the model

Required outputs did not make the model deterministic. They made one boundary checkable.

The model can still choose bad wording. It can still pick the wrong classification. It can still write a value that passes presence checks and fails a human review. Required outputs do not solve semantic correctness.

They solve a narrower problem: the rest of the workflow no longer has to discover, several steps later, that the LLM never produced the state it was supposed to produce.

That narrower problem was worth fixing.

In deterministic systems, silent absence is one of the worst failure modes. A missing key can masquerade as a branch decision, a rendering bug, or a broken integration. By the time someone sees the failure, the cause has drifted away from the place that created it.

Putting the contract on the LLM step made the failure local again.

What this did not solve

The guardrail proves that required fields exist. It does not prove that their values are correct.

That leaves real work:

- tests for the workflows that depend on those fields,

- observability for missing outputs, retries, defaults, and hard failures,

- careful defaults that represent safe product behavior,

- schema validation where the value shape matters, not just presence,

- review paths for decisions that should not be trusted just because a key was written.
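As one illustration of the schema-validation item, a shape check can sit next to the presence check. The allowed decision values and the rules below are hypothetical; a real workflow would declare its own.

```python
ALLOWED_DECISIONS = {"approve", "reject", "manual_review"}  # hypothetical value set


def shape_errors(run_state: dict) -> list[str]:
    """Return human-readable problems with value shape; an empty list means OK."""
    errors = []
    decision = run_state.get("decision")
    if not isinstance(decision, str) or decision not in ALLOWED_DECISIONS:
        errors.append(f"decision has unexpected value: {decision!r}")
    reason = run_state.get("reason")
    if not isinstance(reason, str) or not reason.strip():
        errors.append("reason must be a non-empty string")
    return errors


# Both keys are present, so a presence check passes, yet neither value is usable.
problems = shape_errors({"decision": "maybe", "reason": ""})
print(problems)
```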

A parser that strips harmless Markdown fences is still useful when the input is supposed to be JSON. It just does not replace a runtime contract.
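A fence-tolerant parser along those lines might look like the sketch below. The function name and the regular expression are assumptions for illustration. It unwraps only a single fence around the whole reply and still raises on anything that is not valid JSON.

```python
import json
import re

FENCE = "`" * 3  # built from single backticks so the sample nests cleanly
_FENCED = re.compile(
    r"^\s*" + FENCE + r"[A-Za-z0-9_-]*[ \t]*\r?\n(.*?)\r?\n?" + FENCE + r"\s*$",
    re.DOTALL,
)


def parse_model_json(reply: str):
    """Parse JSON, tolerating one Markdown code fence around the whole reply."""
    match = _FENCED.match(reply)
    body = match.group(1) if match else reply
    return json.loads(body)  # raises json.JSONDecodeError on invalid JSON


fenced = f'{FENCE}json\n{{"decision": "approve"}}\n{FENCE}'
print(parse_model_json(fenced))  # {'decision': 'approve'}
```

The parser widens what the step accepts. It does not check that the parsed object contains the keys the workflow needs.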

But the lesson from this bug was simpler than that.

A deterministic workflow can include an LLM. It just cannot pretend the model's formatting habits are part of the deterministic system. The boundary has to be owned by the runtime.